SALAD-Pan: Sensor-Agnostic Latent Adaptive Diffusion for Pan-Sharpening

1Northwestern Polytechnical University, Xi'an, Shaanxi, China

2Nanjing University of Information Science and Technology, Nanjing, Jiangsu, China

3Zhejiang University, Hangzhou, Zhejiang, China

* Corresponding author

Abstract

Diffusion models have recently brought new insights to pan-sharpening and notably improved fusion precision. However, most existing models perform diffusion in pixel space and train a distinct model for each type of multispectral (MS) imagery, so they suffer from high latency and sensor-specific limitations. In this paper, we present SALAD-Pan, a sensor-agnostic latent-space diffusion method for efficient pan-sharpening. Specifically, SALAD-Pan trains a band-wise single-channel VAE to encode high-resolution multispectral (HRMS) images into compact latent representations, which supports MS images with varying channel counts and establishes a basis for acceleration. Spectral physical properties, along with PAN and MS images, are then injected into the diffusion backbone through unidirectional and bidirectional interactive control structures, respectively, achieving high-precision fusion in the diffusion process. Finally, a lightweight cross-spectral attention module is added to the central layer of the diffusion model, reinforcing spectral connections to improve spectral consistency and further raise fusion precision. Experimental results on GaoFen-2, QuickBird, and WorldView-3 demonstrate that SALAD-Pan outperforms state-of-the-art diffusion-based methods across all three datasets, attains a 2–3$\times$ inference speedup, and exhibits robust zero-shot (cross-sensor) capability.

Keywords: Pan-sharpening, Latent Diffusion, Sensor-Agnostic, Remote Sensing Image Fusion

Methodology

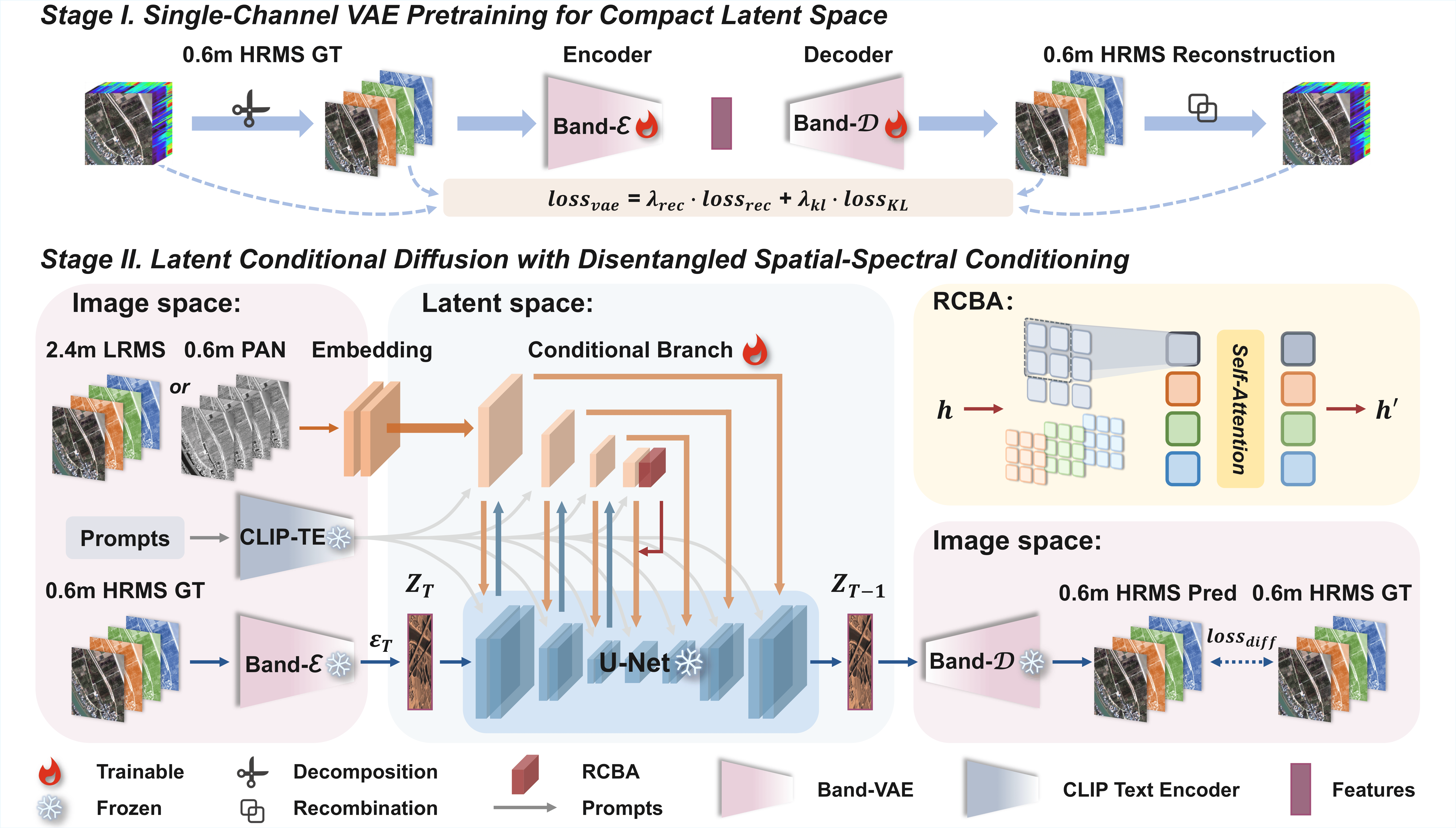

***Framework Overview.*** As illustrated in *Figure 1*, SALAD-Pan employs a two-stage training strategy.

***Stage I: Single-Channel VAE Pretraining.***

We train a band-wise single-channel VAE that encodes each spectral band of HRMS independently into compact latent representations. This band-wise processing strategy naturally supports arbitrary numbers of spectral bands, enabling cross-sensor generalization.
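To make the band-wise idea concrete, here is a minimal NumPy sketch of how a single-channel encoder can serve inputs with different band counts by folding the band axis into the batch axis. It is an illustration under stated assumptions, not the authors' implementation: `toy_encode_single_band` is a hypothetical stand-in (plain average pooling) for the learned VAE encoder, and the downscale factor `LATENT_DOWNSCALE = 4` is an arbitrary choice.

```python
import numpy as np

LATENT_DOWNSCALE = 4  # hypothetical spatial compression factor, not from the paper


def toy_encode_single_band(x: np.ndarray) -> np.ndarray:
    """Stand-in for a single-channel encoder: (N, 1, H, W) -> (N, 1, H/f, W/f).

    Average pooling is used only so the sketch runs; a real VAE encoder is learned.
    """
    n, c, h, w = x.shape
    assert c == 1, "the encoder only ever sees one band at a time"
    f = LATENT_DOWNSCALE
    return x.reshape(n, 1, h // f, f, w // f, f).mean(axis=(3, 5))


def encode_bandwise(ms: np.ndarray) -> np.ndarray:
    """Encode a (B, C, H, W) multispectral batch one band at a time."""
    b, c, h, w = ms.shape
    folded = ms.reshape(b * c, 1, h, w)        # fold bands into the batch axis
    latents = toy_encode_single_band(folded)   # (B*C, 1, h', w')
    return latents.reshape(b, c, *latents.shape[2:])  # unfold to (B, C, h', w')


four_band = np.random.rand(2, 4, 64, 64)   # a 4-band input
eight_band = np.random.rand(2, 8, 64, 64)  # an 8-band input, same encoder
print(encode_bandwise(four_band).shape)
print(encode_bandwise(eight_band).shape)
```

Because the encoder never sees more than one channel, nothing in it depends on the number of bands; only the reshape before and after changes with the sensor.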

***Stage II: Latent Conditional Diffusion.***

With the VAE encoder frozen, we perform conditional diffusion in the latent space. The diffusion backbone receives disentangled spatial-spectral conditioning from the PAN and low-resolution multispectral (LRMS) images via dual control branches, along with sensor-aware physical metadata via text cross-attention. A lightweight region-based cross-band attention (RCBA) module at the central layer further enhances spectral consistency.
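Since each band is processed independently, some mechanism has to let bands exchange information. The following NumPy sketch shows one generic way to do that: scaled dot-product attention across the band axis at every latent location. It is not the paper's RCBA module. The function name `cross_band_attention`, the absence of learned query/key/value projections, the choice of a single latent location as the "region", and the residual connection are all assumptions made to keep the example short.

```python
import numpy as np


def cross_band_attention(z: np.ndarray) -> np.ndarray:
    """Mix information across bands at each spatial location.

    z: (B, C, D, h, w) per-band feature maps with D feature channels per band.
    Returns an array of the same shape. Queries, keys and values are the
    features themselves (no learned projections), which is an assumption.
    """
    b, c, d, h, w = z.shape
    # Treat every spatial location as its own sequence of C band tokens.
    tokens = z.transpose(0, 3, 4, 1, 2).reshape(b * h * w, c, d)  # (N, C, D)
    scores = tokens @ tokens.transpose(0, 2, 1) / np.sqrt(d)      # (N, C, C)
    scores -= scores.max(axis=-1, keepdims=True)                  # numerical stability
    weights = np.exp(scores)
    weights /= weights.sum(axis=-1, keepdims=True)                # softmax over bands
    mixed = weights @ tokens                                      # (N, C, D)
    out = tokens + mixed                                          # residual connection
    return out.reshape(b, h, w, c, d).transpose(0, 3, 4, 1, 2)    # back to (B, C, D, h, w)


z4 = np.random.rand(1, 4, 8, 16, 16)  # 4 bands
z8 = np.random.rand(1, 8, 8, 16, 16)  # 8 bands, same function
print(cross_band_attention(z4).shape, cross_band_attention(z8).shape)
```

The attention matrix is C by C per location, so the cost grows with the number of bands rather than with image size squared, and the same code runs unchanged for any band count.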

Experimental Results

| Models | Pub/Year | Reduced Resolution | | | | Full Resolution | | |
|---|---|---|---|---|---|---|---|---|
| PanNet | ICCV'17 | 0.891±0.045 | 3.613±0.787 | 2.664±0.347 | 0.943±0.018 | 0.017±0.008 | 0.047±0.014 | 0.937±0.015 |
| FusionNet | TGRS'20 | 0.904±0.092 | 3.324±0.411 | 2.465±0.603 | 0.958±0.023 | 0.024±0.011 | 0.036±0.016 | 0.940±0.019 |
| LAGConv | AAAI'22 | 0.910±0.114 | 3.104±1.119 | 2.300±0.911 | 0.980±0.043 | 0.036±0.009 | 0.032±0.016 | 0.934±0.011 |
| BiMPan | ACMM'23 | 0.915±0.087 | 2.984±0.601 | 2.257±0.552 | 0.984±0.005 | 0.017±0.019 | 0.035±0.015 | 0.949±0.026 |
| ARConv | CVPR'25 | 0.916±0.083 | 2.858±0.590 | 2.117±0.528 | 0.989±0.014 | 0.014±0.006 | 0.030±0.007 | 0.958±0.010 |
| WFANet | AAAI'25 | 0.917±0.088 | 2.855±0.618 | 2.095±0.422 | 0.989±0.011 | 0.012±0.007 | 0.031±0.009 | 0.957±0.010 |
| PanDiff | TGRS'23 | 0.898±0.090 | 3.297±0.235 | 2.467±0.166 | 0.980±0.019 | 0.027±0.108 | 0.054±0.047 | 0.920±0.077 |
| SSDiff | NeurIPS'24 | 0.915±0.086 | 2.843±0.529 | 2.106±0.416 | 0.986±0.004 | 0.013±0.005 | 0.031±0.003 | 0.956±0.010 |
| SGDiff | CVPR'25 | 0.921±0.082 | 2.771±0.511 | 2.044±0.449 | 0.987±0.009 | 0.012±0.005 | 0.027±0.003 | 0.960±0.006 |
| SALAD-Pan | | 0.924±0.064 | 2.689±0.135 | 1.839±0.211 | 0.989±0.007 | 0.010±0.008 | 0.021±0.004 | 0.965±0.007 |

Best results are in bold. Second-best results are underlined.

Visualizations

[Image comparisons: PAN vs. SALAD-Pan; LRMS vs. SALAD-Pan]

BibTeX

@misc{li2026_saladpan,
  title={SALAD-Pan: Sensor-Agnostic Latent Adaptive Diffusion for Pan-Sharpening},
  author={Junjie Li and Congyang Ou and Haokui Zhang and Guoting Wei and Shengqin Jiang and Ying Li and Chunhua Shen},
  year={2026},
  eprint={2602.04473},
  archivePrefix={arXiv},
  primaryClass={cs.CV},
  url={https://arxiv.org/abs/2602.04473},
}